Search engines have evolved a lot in the last 20 years. Back during my junior high days, there was no search engine better than AltaVista in my mind. I used it for everything from school projects to finding the latest websites to play games like Candystand’s mini golf. While I knew about Google shortly after its debut, it remained a very distant second for some time.

During the early days of search engines, I also remember finding an annoying number of “Top 50” and “Top 100” lists of site links. Many of these links were broken, and the lists prioritized marketing to you over providing information. As if this wasn’t bad enough, you also had to dodge a slew of unrelated websites that stuffed as many keywords as possible into every area of their pages so they could rank well inside AND outside their subject matter.

You definitely had your work cut out for you when trying to find something relevant to the subject at hand, and most people were frustrated by how long it took to finally arrive at any content of substance.

After a few years of this, Google took their first steps to side with consumers, agreeing that the search process was broken and needed to change.

One of the first major algorithm changes they made put an end to the keyword stuffing that allowed unrelated sites to come up for everything. The scrutiny of thin content, spam, and link farms wasn’t far behind, and big providers using shady tactics were even publicly penalized. A lot has happened since these landmark achievements in Google’s algorithm history – even experts sometimes stumble when trying to separate past from present.

That’s where today’s article comes in. We’re going to cover 5 common mistakes we see in how businesses and other organizations handle SEO (search engine optimization) and help you steer clear of the potholes that will leave you stalled out as your competitors race ahead. They include:

- Placing irrelevant content in your meta titles, meta descriptions, and pages

- Setting up duplicate content across websites to manipulate search rankings

- Paying people for fake reviews on your business listings

- Structuring your website without proper organization

- Establishing irrelevant backlinks to your website

Google reads your website like your customers do – stay on topic

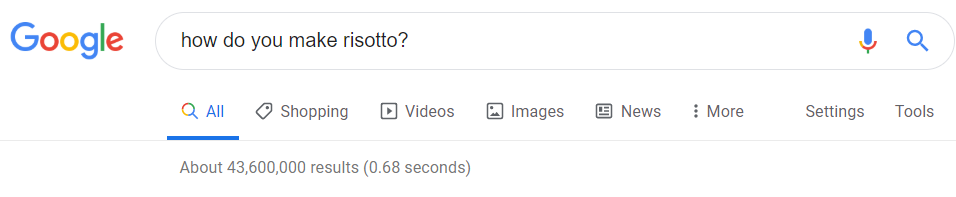

It’s easy to take the current efficiency of search engines for granted. You put in a term, phrase, or question, and Google will deliver multiple results that are tailored to help you find information and answers directly related to your inquiry.

As was mentioned earlier, however, this wasn’t always the case. Since you could stuff any and every keyword that came to mind into your website, performing searches became increasingly annoying as more website administrators abused and gamed this system.

It eventually got to the point where every time you searched for something like a sewing machine, you usually wound up with “Top 100” link lists, unrelated content like sports websites, and then, if you were lucky, the occasional sewing website.

Keyword stuffing was commonly found in three key areas:

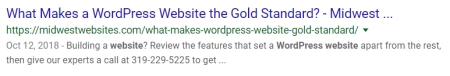

- Meta titles (the blue linked entry of a search result)

- Meta descriptions (the page description appearing as regular text under the meta title)

- Website content (invisible and visible text, footers, links, code, etc. on websites themselves)

Most people know that stuffing keywords into their website hasn’t been a viable strategy for years, but are less informed about the importance these three areas of a website hold today. Since content is one of the most important things Google watches now, each of these areas still has a key role to play in rankings and/or a user’s search experience.
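If you want a rough way to spot accidental keyword stuffing in your own copy, a quick word-frequency check can help. The sketch below is only an illustration: `keyword_density` is a hypothetical helper name, the sample text is made up, and it does not reflect how Google actually evaluates a page.

```python
import re
from collections import Counter


def keyword_density(text: str, top_n: int = 5) -> list[tuple[str, float]]:
    """Return the top_n most frequent words and each word's share of all words."""
    # Lowercase the text and keep only runs of letters, digits, and apostrophes.
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return []
    counts = Counter(words)
    total = len(words)
    return [(word, count / total) for word, count in counts.most_common(top_n)]


# Made-up sample copy that leans far too hard on a single term.
sample = "sewing machine sewing machines cheap sewing best sewing deals sewing"
for word, share in keyword_density(sample, top_n=3):
    print(f"{word}: {share:.0%}")
```

A single word taking up a large share of a page is a hint to reread that page as a visitor would, not a hard rule.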

Meta titles play a role in both rankings and search experience, and should be crafted with a couple of points in mind. The first is subject matter – choosing titles that uniquely represent the content on their pages and include terms people will actually search for is critical when building your website. The second is identification – including your company name or a primary service reinforces who you are and what you do while also helping people find you again on repeat searches.

Google displays up to 600 pixels of width for meta titles in most search results right now, so the maximum number of characters you can use will vary. Fortunately, there are tools you can use to get a rough idea of how your search result will look before you publish it.

Meta descriptions currently have zero bearing on your SEO, so you are better served by using that space to give people a reason to either click the link or contact you directly. Text limits on these have fluctuated multiple times since 2018, and there’s a bit of controversy as to where they currently stand. These limits also appear to vary between desktop and mobile views, so there may be some trial and error in crafting your descriptions.
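Before reaching for a preview tool, it can help to see exactly what title and description a page is currently serving. Here is a minimal sketch using Python's standard `html.parser` module. The names `HeadTextParser` and `summarize_head` are our own, and the sample page is invented. It only reports character counts, since pixel width depends on the font Google renders with.

```python
from html.parser import HTMLParser


class HeadTextParser(HTMLParser):
    """Collect the <title> text and the meta description from an HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attr_map.get("name") or "").lower() == "description":
            self.description = attr_map.get("content") or ""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        # Only text that appears between <title> and </title> is kept.
        if self._in_title:
            self.title += data


def summarize_head(html: str) -> dict:
    """Return the title, the description, and their character counts."""
    parser = HeadTextParser()
    parser.feed(html)
    title = parser.title.strip()
    description = parser.description.strip()
    return {
        "title": title,
        "title_chars": len(title),
        "description": description,
        "description_chars": len(description),
    }


# Invented example page for illustration.
page = """<html><head>
<title>Sewing Machine Repair | Example Co.</title>
<meta name="description" content="Same-day sewing machine repair. Call to book a visit.">
</head><body></body></html>"""

print(summarize_head(page))
```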

Don’t spend too much time stressing over these two areas though – at the end of the day, Google will use a title and description it deems best for your page. This may differ slightly or dramatically for both search results and rich snippets, and is usually drawn from your page’s content in these cases.

Naturally, this means your website content is by far the most important of these three areas of interest. Not only is Google adept at automatically reading pages as your visitors do, they also have people perform manual reviews on websites to ensure everything is above board.

Tricking Google just isn’t going to work long-term, and is the single worst thing you can try if you want to stay in their rankings at all. That hasn’t stopped people from trying though, which leads us nicely into our next topics.

Using duplicate content to put one over on Google will leave you with nothing

Duplicate content is frequently paraded as a cardinal sin when discussing SEO. While there is some truth to this, the consequences can vary wildly depending upon the circumstances that are causing it to occur. For the purposes of this discussion, let’s define duplicate content as two sources where over 90% of the written or overall page content is the same.
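To make that 90% working definition concrete, here is a small sketch that compares two blocks of text word by word using `difflib.SequenceMatcher` from Python's standard library. The function names and sample sentences are hypothetical, the threshold is just this article's rule of thumb, and none of it represents how Google measures duplication.

```python
from difflib import SequenceMatcher


def content_similarity(text_a: str, text_b: str) -> float:
    """Return a 0.0 to 1.0 similarity ratio based on matching word sequences."""
    words_a = text_a.lower().split()
    words_b = text_b.lower().split()
    # autojunk=False keeps frequent words from being ignored on long pages.
    return SequenceMatcher(None, words_a, words_b, autojunk=False).ratio()


def looks_duplicate(text_a: str, text_b: str, threshold: float = 0.9) -> bool:
    """Flag two texts as duplicates when their similarity exceeds the threshold."""
    return content_similarity(text_a, text_b) > threshold


# Made-up page copy where only the city name differs.
page_a = "We repair sewing machines in Iowa City and offer same day service"
page_b = "We repair sewing machines in Cedar Rapids and offer same day service"

print(f"similarity: {content_similarity(page_a, page_b):.2f}")
print("duplicate?", looks_duplicate(page_a, page_b))
```

On real pages you would compare the full body text, where swapping out a city name changes a much smaller share of the words than it does in these short samples.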

In some cases, it is simply unavoidable. Websites usually have distinct desktop and mobile views these days, and many blogs use AMP (accelerated mobile pages) on mobile sites to improve load times and provide a better mobile browsing experience. Others have distinct, printer-friendly views of pages.

Does using these techniques to aid your visitors mean you’re going to get penalized?

Of course not. Google backed AMP after all, so it would be completely hypocritical for them to punish people using it or similar practices. Generally speaking, Google handles this issue by filtering duplicate entries (we’re talking about the majority of a page here) out of search results so people only see the one most relevant to them out of a group. You can further aid this process by indicating which version of a page is the preferred one (also known as canonicalization).
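One common way to signal the preferred version is a `<link rel="canonical">` tag in the page's head. The sketch below checks which canonical URL a page declares, assuming that tag is the method in use. `CanonicalFinder`, `find_canonical`, and the example.com URLs are placeholders of our own.

```python
from html.parser import HTMLParser
from typing import Optional


class CanonicalFinder(HTMLParser):
    """Record the href of the first <link rel="canonical"> tag on a page."""

    def __init__(self) -> None:
        super().__init__()
        self.canonical: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        # rel can hold several space-separated values, so split before checking.
        rel_values = (attr_map.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values and self.canonical is None:
            self.canonical = attr_map.get("href")


def find_canonical(html: str) -> Optional[str]:
    """Return the declared canonical URL, or None if the page has none."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonical


# Invented printer-friendly page that points back to the main version.
print_view = """<html><head>
<title>Sewing Machine Repair (Print View)</title>
<link rel="canonical" href="https://www.example.com/services/sewing-machine-repair/">
</head><body></body></html>"""

print(find_canonical(print_view))
```

Running a check like this across your desktop, mobile, and print views is a quick way to confirm they all point at the same preferred page.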

What happens when duplicate content starts showing up across multiple domains though?

This answer becomes a bit more complicated depending on whether Google sees the action as malicious or not.

Generally speaking, a best case scenario is going to involve Google acknowledging either the original or most authoritative version of established content, and simply not allowing duplicate entries to show up in searches or gain ranking traction.

If you’re handling all sources of duplicate content yourself and clearly aren’t trying to game the system, great! You’ve got nothing to worry about.

Unfortunately, if a large agency is lazy about how it handles website content for businesses in the same industry and market, such as my earlier example with hibu, this sort of situation can get muddy very quickly. At a glance, it looks malicious, and Google has a zero-tolerance policy for deceptive manipulation of their search results.

For those wondering what that entails, Google removes sites from their search results that break (or appear to break) their Webmaster Guidelines, and won’t reinstate a site until it follows them and goes through a reconsideration process.

While Google does a pretty good job of seeing through problems like these, it is important to remember their system is not foolproof. I’ve seen people achieve short-term success against competitors by weaponizing plagiarism, and I’ve seen others suffer through false positives for malicious intent that wasn’t there.

Your webmaster/SEO team should always be keeping an eye on your website rankings to ensure you don’t get caught up in this trap by mistake.

Google isn’t going to be fooled by fake business reviews, and it isn’t worth the risk to try

Few things will help you stand apart from your online competition more than positive local reviews. In addition to the SEO boost, you also reap the benefit of prominently showcasing this positive feedback in your Google business listing as prospective customers find it, inspiring trust and giving them a reason to choose you.

As a result, some companies solicit people to leave positive reviews for services they never received. At first glance, it is extremely easy to see why they’d take the time to do this, and you might be wondering why you shouldn’t try it yourself.

My response is simply this: Do you think you are going to convince a company that deals primarily in information that your local deli in Iowa City, IA served 20 people from California, Massachusetts, and Texas when the phones/computers that left those reviews are being cross-checked by IP address and/or GPS in their home states?

No, no you are not.

That doesn’t mean you can’t encourage your customers to leave reviews for you though – we definitely recommend you do so! There’s simply no substitute for good, honest feedback. Just don’t try to solicit random people to pad your numbers. It’s a violation of Google’s terms of use and can land you in legal trouble too.

An organized website is as important to Google as your prospective customers

Have you ever been to a website where the navigation menu takes up three lines, and each button has a submenu? Then each item in that submenu also has a submenu. And right when you’re about to click the link, you accidentally bump your mouse and have to start pulling up the page you wanted all over again.

It’s harder to find a monstrosity like this nowadays, but when you find a site that was designed that way on purpose, it’s always good for a cringe and a lot of frustration.

Don’t get us wrong – having a lot of relevant content is very good for a website. Keeping it organized is critical for keeping your users’ experience positive and engaging though. Examples of this being done correctly can usually be found in blogs, storefronts, and database-driven content on a regular basis. Even corporate giants like Amazon take the time to break products into categories to make things as easy to find as possible.

Add in the short attention span and impatience of your average Internet surfer and it’s no wonder navigation nightmares are uncommon today. Site visitors expect you to present your content in a manner that is logical and easy to follow. If it isn’t, they’ll leave.

Your URLs should follow a similarly organized structure, as Google takes these into consideration when crawling and ranking. Individual page URLs should clearly signify what the page is about without being too long or carrying a string of random letters, numbers, and symbols.
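As a rough illustration of a readable URL, the sketch below turns a page title into a short, lowercase, hyphenated slug. The `slugify` helper and its six-word cap are our own assumptions for the example, not a Google requirement.

```python
import re
import unicodedata


def slugify(title: str, max_words: int = 6) -> str:
    """Turn a page title into a short, lowercase, hyphen-separated URL slug."""
    # Strip accents so characters like "é" become plain "e".
    ascii_text = (
        unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    )
    # Keep only runs of letters and digits, which drops symbols and punctuation.
    words = re.findall(r"[a-z0-9]+", ascii_text.lower())
    return "-".join(words[:max_words])


print("/services/" + slugify("Sewing Machine Repair & Tune-Ups!"))
```

Pairing a slug like this with a category folder, such as the hypothetical `/services/` prefix above, keeps the URL structure in line with how the site itself is organized.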

This organization is doubly important for simplified mobile layouts. Nobody is going to want to wade through pages of nonsense to get to what they’re looking for, let alone when some of your website’s bells and whistles are disabled to simplify its loading process.

A good rule of thumb when designing your site and page URLs is to ask yourself “Would I click away from this site if I found it?” If your answer is yes or required any hesitation, expect your customers to feel the same way. As long as you focus on your customers’ experience, you’ll never stray too far from where you need to be here.

The sources of your backlinks are far more important than the links themselves

A backlink is a link from another website that leads to yours. Establishing authoritative backlinks is one of the Holy Grails of SEO, and represents a big boost for you if you can pull it off. The more trust and authority the linking website has in your given field, the bigger the boon you receive. The better your website content is, the more likely a company or content creator is to take your request for a link seriously.

Needless to say, I was not impressed by their efforts.

Sadly, this step gets overlooked or put on the back burner by a number of SEO providers. I’ve seen some toss up throwaway blogs that link back to you and call it a day, others spam the comments section of every other website they can find in hopes of getting some backlinks to stick, and a few even use dated techniques like link wheels that are considered black hat or actively harmful.

If you don’t know to look out for these pitfalls, it makes the work being done for you sound a lot more impressive than it actually is. Links like these typically hold little to no value for you if they aren’t from reputable, established sources in your field.

While this sounds difficult on paper, reputable links can literally come from any site with trust and authority. Local, statewide, national, or even global websites can be huge boons depending on your market. The more you can claim, the bigger your advantage when Google sizes up your website against the competition.

Don’t use this as an excuse to be stingy with giving out backlinks from your website though – it’s perfectly okay to link to other websites where appropriate, and Google will also see this as one of many opportunities to note improvement in your own authority if you hand them out judiciously.

In search of a helping hand with your SEO services?

Let us step in to help you do some of the heavy lifting! Send us an email on our Contact page or call 319-229-5225 to schedule an appointment where we can talk about your needs and goals today!

Braden is one of the founders of Midwest Websites, and has been professionally writing and developing websites for over 7 years. His blog posts often take an experience from his life and showcase lessons from it to help you maximize online presence for your business.